End of Life Notice

* Effective Date: 2025/07/15

The Next Wave for Edge AI Computing

with Coral Edge TPU™

In recent years, artificial intelligence (AI) has been applied across many industries and has changed the world. Edge AI has become vital as customers move more services to local devices to increase privacy and security and to minimize latency.

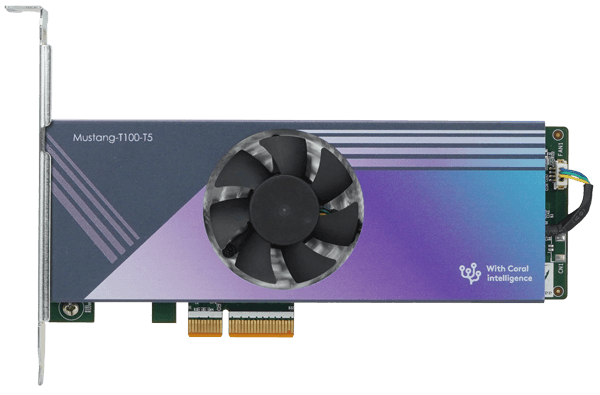

IEI’s Mustang-T100 leverages Coral technology, a complete and fast toolkit for building products with local AI, to give AI developers a different AI inference platform at the edge. The Mustang-T100 integrates five Coral Edge TPU™ co-processors in a half-height, half-length PCIe card and delivers computing speed of up to 20 TOPS at very low power consumption (only 15W). Backed by the well-developed TensorFlow Lite community, it makes it simple to bring existing models into your edge inference project. The Mustang-T100 is an ideal AI PCIe accelerator card for image classification, object detection and image segmentation.

Powerful Coral Edge TPU

High Computing Capability

Lower Power Consumption

Wide Temperature Support

Streamlined Design

Solid but Delicate, High Computing but Low Power

With five Coral Edge TPU modules, the Mustang-T100 delivers ML inferencing speed of up to 20 TOPS while consuming only 15W. To let every AI task run in a barrier-free environment, the Mustang-T100 operates across a wide temperature range from -20°C to 55°C.

15W

Very Low Power Consumption

Up to 20 TOPS

High ML Inferencing Speed

-20~55°C

Built for Harsh Environments

Coral Brings New Power to

On-device Intelligence Solutions

Each Coral Edge TPU™ can perform 4 trillion operations per second (4 TOPS) using only 2 watts of power, which works out to 2 TOPS per watt, and it connects over a PCIe Gen2 x1 or USB 2.0 interface. Coral's on-device ML acceleration technology helps developers build fast, efficient, and affordable solutions for the edge.
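The efficiency figures can be checked with a few lines of arithmetic. The sketch below uses only numbers quoted in this datasheet; the card-level TOPS-per-watt value is derived here from the quoted 20 TOPS and 15W and is not a published specification.

```python
# Figures quoted in this datasheet.
TOPS_PER_TPU = 4.0    # trillion operations per second, per Edge TPU
WATTS_PER_TPU = 2.0   # power drawn by one Edge TPU
TPUS_PER_CARD = 5     # Edge TPUs on one Mustang-T100
CARD_WATTS = 15.0     # quoted power consumption of the whole card


def tops_per_watt(tops: float, watts: float) -> float:
    """Return compute efficiency in TOPS per watt."""
    return tops / watts


tpu_efficiency = tops_per_watt(TOPS_PER_TPU, WATTS_PER_TPU)
card_tops = TOPS_PER_TPU * TPUS_PER_CARD
card_efficiency = tops_per_watt(card_tops, CARD_WATTS)  # derived, not quoted

print(f"Per TPU : {tpu_efficiency:.2f} TOPS/W")
print(f"Per card: {card_tops:.0f} TOPS peak, {card_efficiency:.2f} TOPS/W")
```

The card-level figure is lower than the per-TPU figure because the 15W budget covers the whole card, not only the five Edge TPUs.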

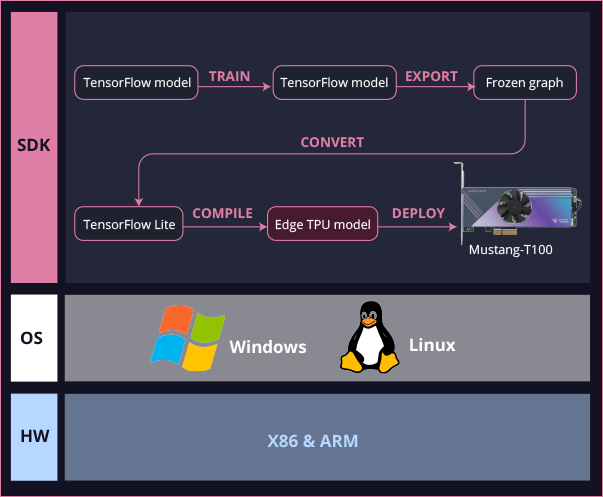

High Compatibility from The Start

To support the diverse needs of IT and AI developers, the Mustang-T100 runs on various operating systems, such as Linux and Windows, and can be deployed on both x86 and Arm platforms to accelerate and maximize edge AI performance. Moreover, with TensorFlow Lite there is no need to build ML models from the ground up: TensorFlow Lite models can be compiled to run entirely on the Edge TPU.
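As a minimal sketch of what loading a compiled model can look like on Linux or Windows, the helper below selects the Edge TPU runtime library for the host OS and hands it to the TensorFlow Lite interpreter as a delegate. It assumes the `tflite_runtime` Python package and the Edge TPU runtime are already installed, and that the model file (the path is a placeholder you supply) was already compiled for the Edge TPU. The function names `edgetpu_library` and `load_compiled_model` are illustrative, not part of any IEI or Coral API.

```python
import platform

# Shared-library names of the Edge TPU runtime on each host OS.
_EDGETPU_LIBS = {
    "Linux": "libedgetpu.so.1",
    "Windows": "edgetpu.dll",
}


def edgetpu_library(system=None):
    """Return the Edge TPU delegate library name for the given or host OS."""
    system = system or platform.system()
    try:
        return _EDGETPU_LIBS[system]
    except KeyError:
        raise RuntimeError(f"No Edge TPU runtime listed for {system!r}") from None


def load_compiled_model(model_path):
    """Create a TensorFlow Lite interpreter that delegates to an Edge TPU."""
    # Imported lazily so edgetpu_library() works without the runtime installed.
    from tflite_runtime.interpreter import Interpreter, load_delegate

    interpreter = Interpreter(
        model_path=model_path,
        experimental_delegates=[load_delegate(edgetpu_library())],
    )
    interpreter.allocate_tensors()
    return interpreter
```

Calling `load_compiled_model` requires the card and its driver to be present; `edgetpu_library` can be tried on any machine.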

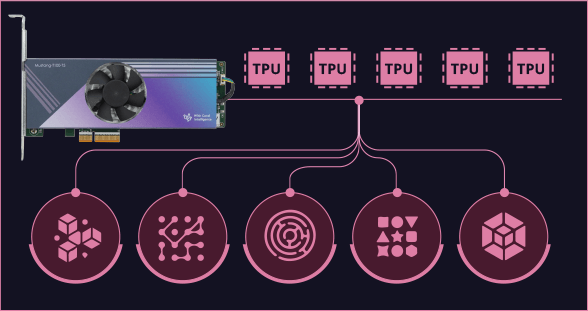

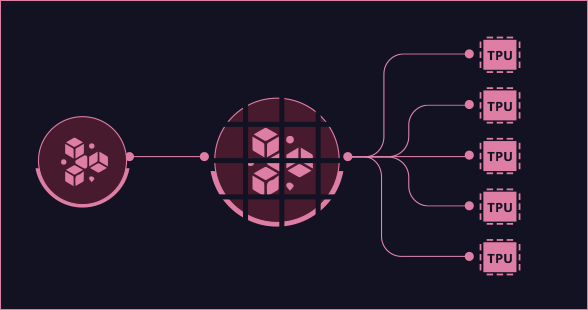

Multitasking or Pipelining, Select Your Inferencing Mode

For the many AI applications at the edge, you can choose between two modes for running your inferencing project, depending on your needs.

Multitasking function to run each model in parallel

If you need to run multiple models, you can assign each model to a specific Edge TPU and run them in parallel at the same time for extreme computing performance.
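One way to express this assignment is with the PyCoral library, whose `make_interpreter` function accepts a device string such as `pci:0` to pick a specific PCIe-attached Edge TPU. The sketch below is an assumption about how you might organize it, not IEI's reference code: `assign_models` and `make_interpreters` are illustrative helpers, and the model file names in the usage note are placeholders.

```python
def assign_models(model_paths, num_tpus=5):
    """Map each model file to its own PCIe Edge TPU, e.g. 'pci:0'."""
    if len(model_paths) > num_tpus:
        raise ValueError("more models than Edge TPUs on the card")
    return {path: f"pci:{index}" for index, path in enumerate(model_paths)}


def make_interpreters(assignment):
    """Build one interpreter per model, each bound to its assigned Edge TPU."""
    # Imported lazily: PyCoral is only needed on a machine with the card.
    from pycoral.utils.edgetpu import make_interpreter

    interpreters = {}
    for path, device in assignment.items():
        interpreter = make_interpreter(path, device=device)
        interpreter.allocate_tensors()
        interpreters[path] = interpreter
    return interpreters
```

For example, `assign_models(["detect_edgetpu.tflite", "classify_edgetpu.tflite"])` maps the first model to `pci:0` and the second to `pci:1`. Each resulting interpreter can then be invoked from its own thread so the models run at the same time.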

Model pipelining to get faster throughput and lower latency

For other scenarios that require very fast throughput or large models, pipelining your model allows you to execute different segments of the same model on different Edge TPUs. This can improve throughput for high-speed applications and can reduce total latency for large models.
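The idea behind pipelining can be illustrated without any hardware. In the toy sketch below, each stage runs in its own thread and passes its result to the next stage through a queue, so stage 1 can start on the next input while stage 2 is still working on the previous one. The three lambda functions are stand-ins for model segments; on real hardware each segment would run on its own Edge TPU, and the PyCoral library's pipelining API would take the place of this toy runner.

```python
import queue
import threading

_STOP = object()  # sentinel that tells a stage to shut down


def _stage_worker(stage_fn, inbox, outbox):
    """Apply stage_fn to every item from inbox and forward the result."""
    while True:
        item = inbox.get()
        if item is _STOP:
            outbox.put(_STOP)  # propagate shutdown to the next stage
            return
        outbox.put(stage_fn(item))


def run_pipeline(stage_fns, inputs):
    """Push inputs through the stages; each stage runs in its own thread."""
    queues = [queue.Queue() for _ in range(len(stage_fns) + 1)]
    workers = [
        threading.Thread(target=_stage_worker, args=(fn, queues[i], queues[i + 1]))
        for i, fn in enumerate(stage_fns)
    ]
    for worker in workers:
        worker.start()
    for item in inputs:
        queues[0].put(item)
    queues[0].put(_STOP)

    results = []
    while True:
        item = queues[-1].get()
        if item is _STOP:
            break
        results.append(item)
    for worker in workers:
        worker.join()
    return results


# Stand-in segments; a real segment would invoke part of a model on one TPU.
segments = [lambda x: x + 1, lambda x: x * 2, lambda x: x - 3]
outputs = run_pipeline(segments, [1, 2, 3])
print(outputs)  # [1, 3, 5]
```

Because every stage is a single thread reading from a first-in, first-out queue, results come out in the same order the inputs went in.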

Optimize Pre-trained Models at The Edge

The Mustang-T100 supports AutoML Vision Edge, so AI developers can use the Edge TPU to accelerate transfer learning with a pre-trained model directly on edge devices. Models can be optimized to increase accuracy and save training time using fewer than 200 images.

Smarter Fan Solution

Once the Edge TPU driver is installed, the TPU temperature is detected automatically. The driver also supports a smart fan trigger setting that enhances the reliability and durability of edge devices and stabilizes TPU computing performance.
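This datasheet does not describe how the fan trigger is implemented. As an illustration only, a temperature-triggered fan is often modeled as a hysteresis switch: it turns on above one threshold and turns off again only once the temperature falls below a lower one, which avoids rapid on/off toggling. The class name and both thresholds below are hypothetical, and reading the temperature from the driver is not shown.

```python
from dataclasses import dataclass


@dataclass
class FanTrigger:
    """Hysteresis switch: on at or above on_c, off at or below off_c."""

    on_c: float = 70.0   # hypothetical trigger temperature in °C
    off_c: float = 60.0  # hypothetical release temperature in °C
    running: bool = False

    def update(self, temp_c: float) -> bool:
        """Feed one temperature reading and return the new fan state."""
        if temp_c >= self.on_c:
            self.running = True
        elif temp_c <= self.off_c:
            self.running = False
        # Between the two thresholds the previous state is kept.
        return self.running


trigger = FanTrigger()
readings = [55.0, 68.0, 72.0, 65.0, 59.0]
states = [trigger.update(t) for t in readings]
print(states)  # [False, False, True, True, False]
```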

Create AI Environment Simply

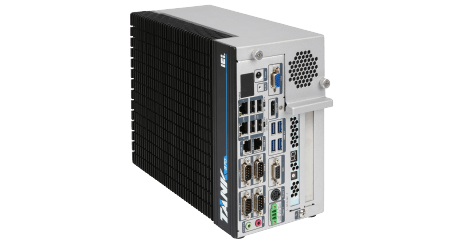

IEI provides a rich range of AI-ready platforms, such as the TANK series, the FLEX series and the DRPC series. All series are ideal for deep learning inference computing to help you get faster, deeper insights for your customers and your business. All IEI AI-ready systems can support up to four* Mustang-T100 cards to deploy and run your AI applications more efficiently.

Application Field

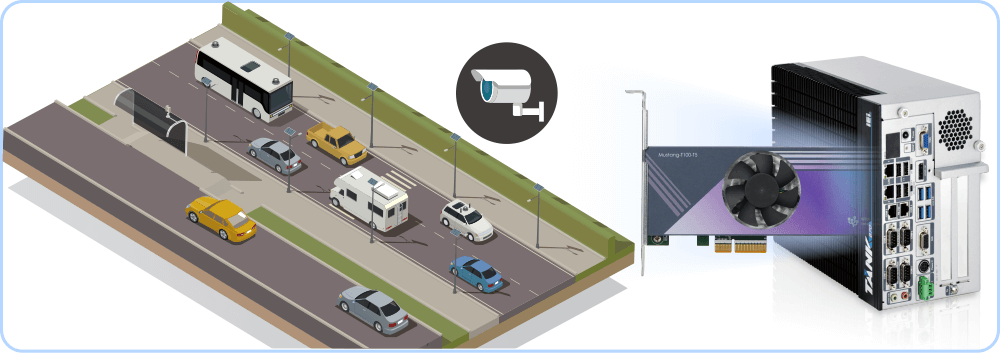

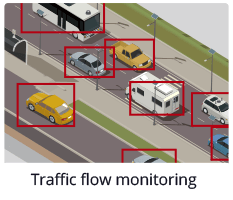

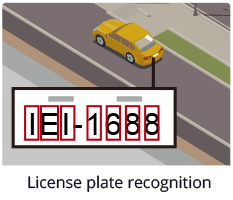

Intelligent Traffic Management

For pedestrian safety and traffic efficiency, AI can help the traffic control center monitor roads, crosswalks and bus stops and react to traffic conditions immediately.

The AI system is accelerated by the Mustang-T100, which is powered by Coral Edge TPUs. With five Edge TPUs onboard, the system can handle more camera channels.

Reliable Campus Security

This case applies an AI system on a campus. Because the campus is large and requires many cameras, it needs higher computing performance.

Using the DRPC series with the Mustang-T100 to handle a larger AI workload, the solution supports multiple algorithms and multiple camera channels. The customer therefore uses this system for face recognition, loitering alerts, crowd management, people counting, and parking management.

Smarter Retail Management

This application monitors people in a store. During the COVID-19 period, it is necessary to manage all guests to maintain social distancing, ensure customers wear masks correctly, and monitor the number of people.

By combining the FLEX series with the powerful Mustang-T100 accelerator card, more camera channels can be deployed across a large site, such as a department store or supermarket.

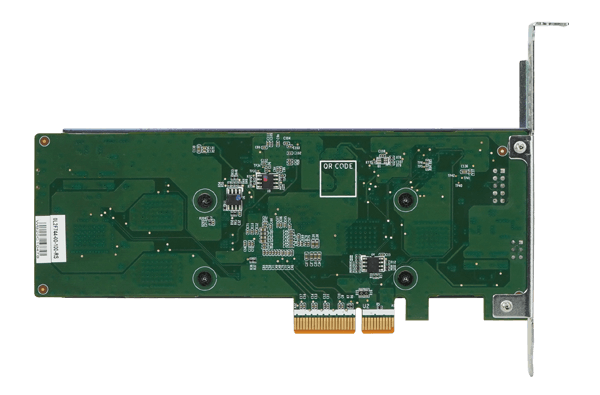

Hardware Spec.

| Form Factor | |
|---|---|
| Form Factor | Low-profile PCIe card |

| System | |
|---|---|
| CPU | 5 x Google Coral Edge TPU |
| Cooling method / System Fan | Active Fan |

| Physical Characteristics | |
|---|---|
| Dimensions (LxWxH) (mm) | 167.64 (L) x 64.41 (W) x 18 (H) |

| Environment | |
|---|---|
| Operating Temperature | -20°C ~ 55°C |
| Humidity | 5% ~ 90% RH, Non-condensing |

| Certifications | |
|---|---|
| Safety & EMC | RoHS |