End of Life Notice

* Effective Date: 2023/12/29

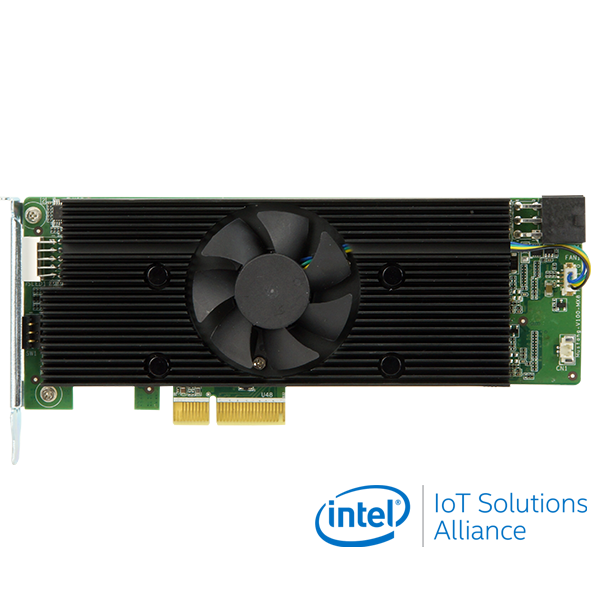

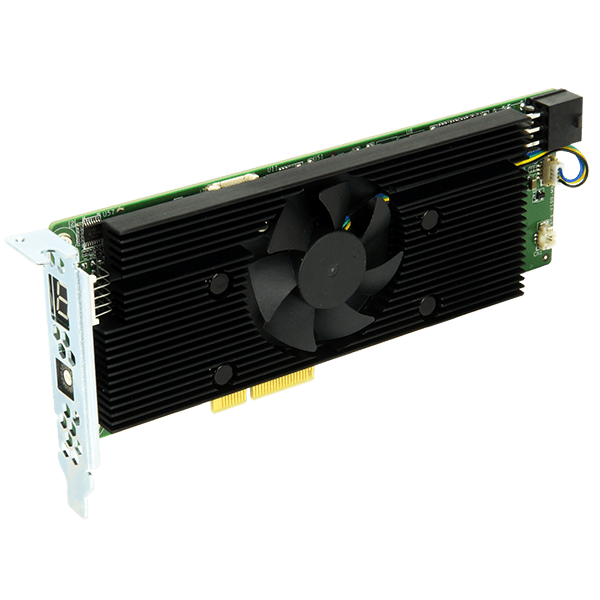

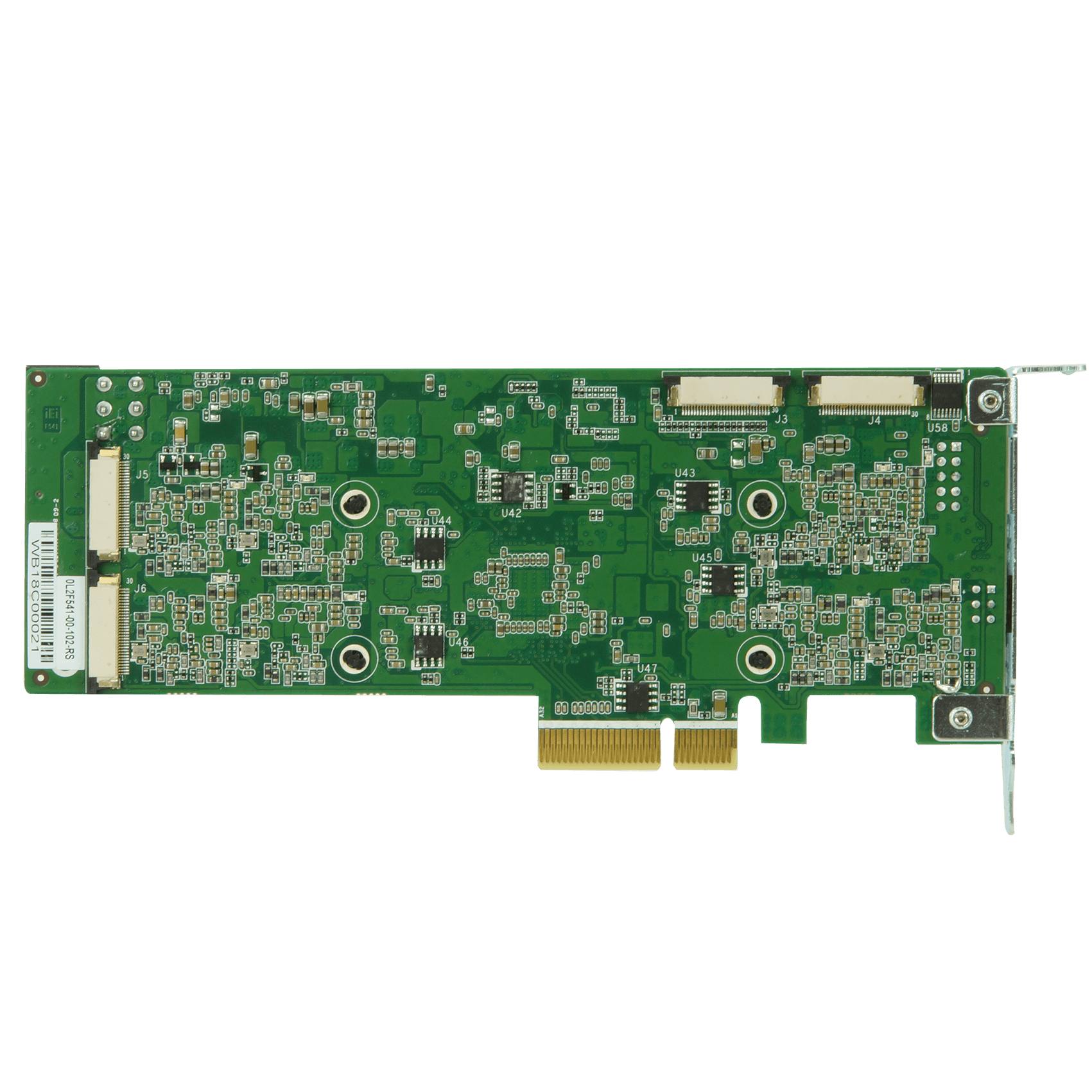

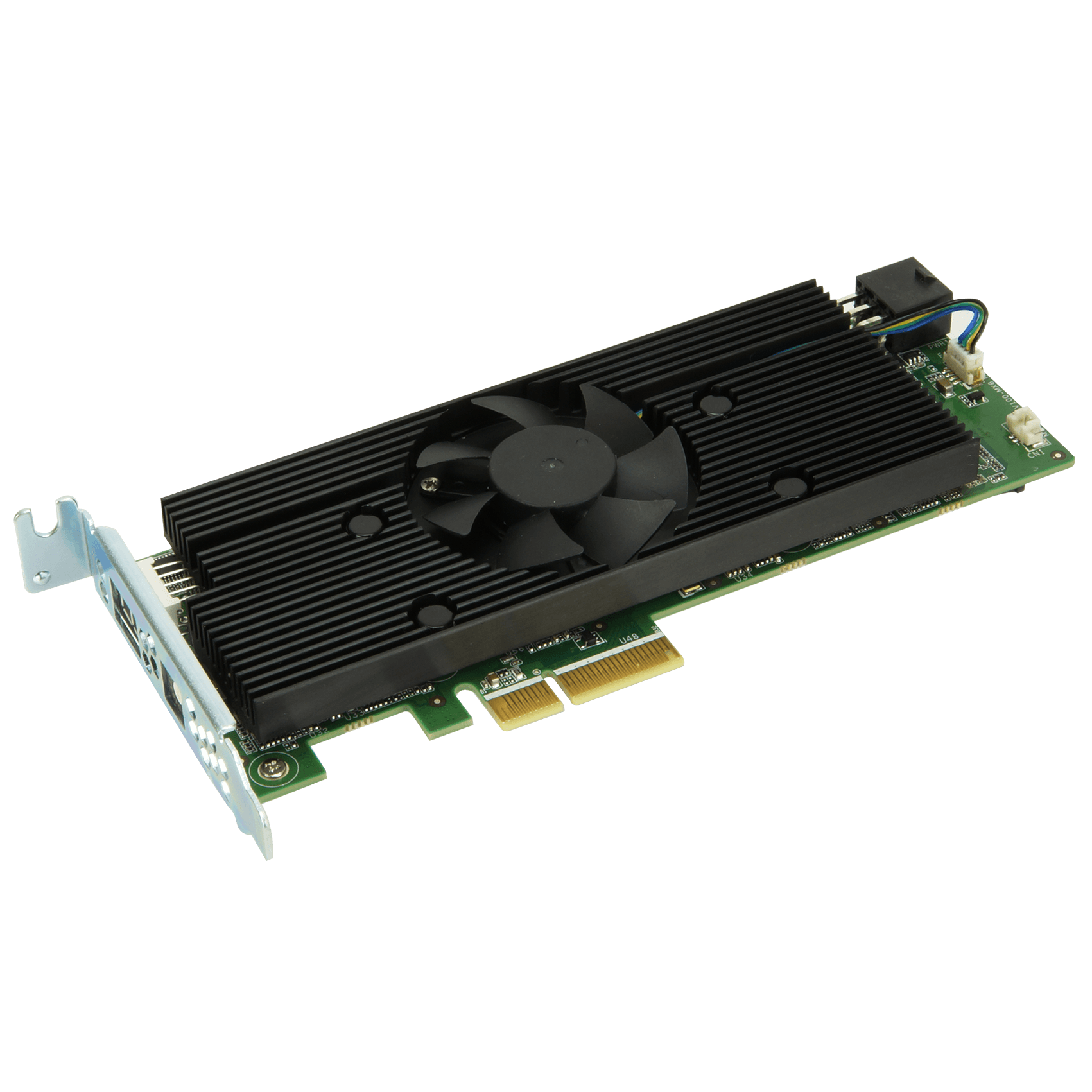

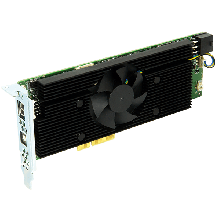

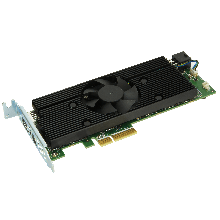

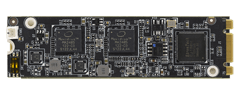

» Half-Height, Half-Length, Single-slot compact size

» Low power consumption, approximately 25 W

» Supports the OpenVINO™ toolkit; AI edge computing ready device

» Eight Intel® Movidius™ Myriad™ X VPUs can execute multiple topologies simultaneously.

Warning:

DO NOT install the Mustang-V100-MX8 into the TANK AIoT Dev. Kit before shipment. Ship both in their original boxes to prevent the Mustang-V100-MX8 from being damaged.

To improve compatibility with server-grade systems, IEI recommends updating the Mustang-V100-MX8 firmware. If your product's serial number indicates a date before June 2020 (e.g., Q205XXXXXX stands for May 2020), please contact IEI (IEI Technical Support Form), and our team will help you update it.
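The serial number check described above can be sketched in Python. This is only an illustration: the prefix layout (one letter, a two-digit year, then a one-digit month) is an assumption inferred from the single example Q205XXXXXX, and the function names are hypothetical. How October through December are encoded is not stated here, so a serial such as Q201XXXXXX is read as January 2020 and any other month character is rejected. When in doubt, confirm with IEI.

```python
import re
from datetime import date

# Assumed layout, inferred only from the example "Q205XXXXXX" = May 2020:
# one letter, a two-digit year, a one-digit month (1-9), then the rest.
_SERIAL_PREFIX = re.compile(r"^[A-Z](\d{2})([1-9])")

# "Before June 2020" per the notice above.
FIRMWARE_CUTOFF = date(2020, 6, 1)


def serial_date(serial: str) -> date:
    """Return the first day of the month encoded in the serial prefix."""
    match = _SERIAL_PREFIX.match(serial.strip().upper())
    if match is None:
        raise ValueError(f"unrecognized serial number format: {serial!r}")
    year = 2000 + int(match.group(1))
    month = int(match.group(2))
    return date(year, month, 1)


def needs_firmware_update(serial: str) -> bool:
    """True if the encoded date falls before the June 2020 cutoff."""
    return serial_date(serial) < FIRMWARE_CUTOFF
```

For example, `needs_firmware_update("Q205XXXXXX")` returns `True`, while a serial beginning with `Q206` returns `False`.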

Hardware Spec.

| System | |
|---|---|
| Chipset | Eight Intel® Movidius™ Myriad™ X MA2485 VPUs |
| Cooling method / System Fan | Active fan |

| Power | |
|---|---|
| Power Consumption | Approximately 25 W |

| Environment | |
|---|---|
| Operating Temperature | -20°C ~ 60°C |
| Humidity | 5% ~ 90% |

| Card Slot Interface | |
|---|---|
| PCIe | x4 |
