
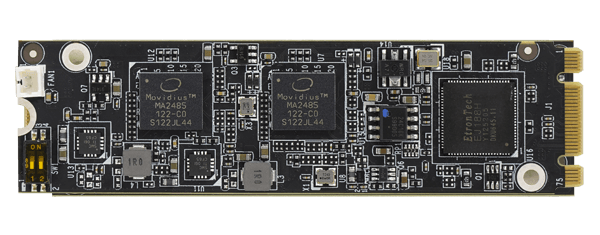

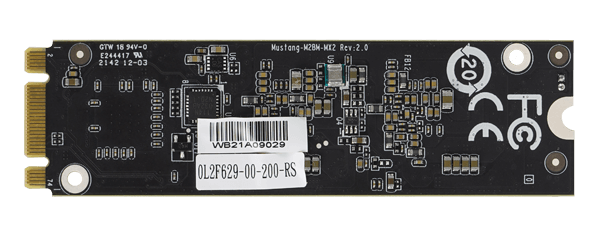

Mustang-M2BM

» M.2 BM key form factor (22 x 80 mm)

» 2 x Intel® Movidius™ Myriad™ X VPU MA2485

» Power efficient, approximately 7 W

» Operating Temperature -20°C~60°C (Tested in IEI FLEX-BX200)

» Powered by Intel's OpenVINO™ toolkit

Hardware Spec.

| System | |
|---|---|
| Chipset | 2 x Intel® Movidius™ Myriad™ X MA2485 VPU |
| Cooling method / System Fan | Active Heatsink |

| Physical Characteristics | |
|---|---|
| Dimensions (LxWxH) (mm) | 22 x 80 |

| Power | |
|---|---|
| Power Consumption | Approximately 7 W |

| Environment | |
|---|---|
| Operating Temperature | -20°C ~ 60°C (Tested in IEI FLEX-BX200) |
| Humidity | 5% ~ 90% |

| Card Slot Interface | |
|---|---|
| M.2 | BM Key |
