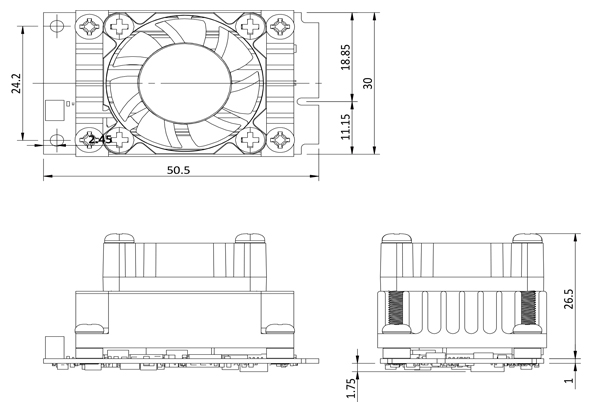

miniPCIe form factor (30 x 50 mm)

|

|

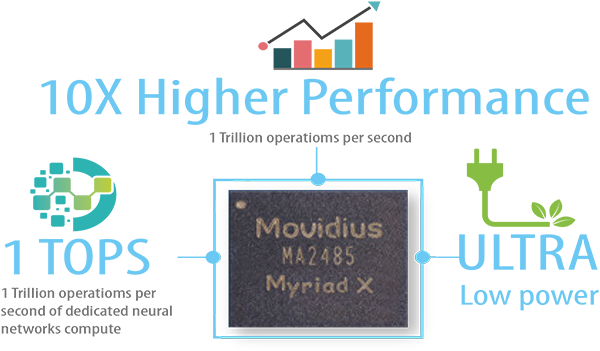

2 x Intel® Movidius™ Myriad™ X VPU MA2485

|

|

Power efficiency ,approximate 7.5W

|

|

0°C~55°C (In TANK AIoT Dev. Kit)

|

|

Powered by Intel's OpenVINO™ toolkit

|

|

.jpg) |

|

|

|

|

The Mustang-MPCIE-MX2 card includes two Intel® Movidius™ Myriad™ X VPUs, providing a flexible AI inference solution for compact and embedded systems.

VPU is short for vision processing unit. It accelerates AI inference and is well suited to low-power applications such as surveillance, retail, and transportation. Because it combines power efficiency with high performance on dedicated DNN topologies, it is a good fit for AI edge computing devices, where it reduces total power usage and gives rechargeable edge equipment a longer duty time.
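The link between power draw and duty time can be made concrete with a little arithmetic. The sketch below is only an illustration: the 7.5 W figure is the approximate card power quoted on this page, while the battery capacity and host power are hypothetical values chosen for the example, not measurements.

```python
def runtime_hours(battery_wh: float, load_watts: float) -> float:
    """Return how many hours a battery of `battery_wh` watt-hours lasts at `load_watts`."""
    if battery_wh < 0 or load_watts <= 0:
        raise ValueError("battery_wh must be >= 0 and load_watts must be > 0")
    return battery_wh / load_watts


CARD_WATTS = 7.5    # approximate card power, taken from this page
HOST_WATTS = 12.5   # hypothetical host system power (assumption)
BATTERY_WH = 60.0   # hypothetical battery capacity (assumption)

if __name__ == "__main__":
    total_watts = HOST_WATTS + CARD_WATTS
    hours = runtime_hours(BATTERY_WH, total_watts)
    print(f"Estimated duty time at {total_watts} W: {hours:.1f} h")
```

Every watt removed from the total load lengthens the estimate proportionally, which is why a low-power accelerator matters most on battery-powered equipment.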


Key Features of Intel® Movidius™ Myriad™ X VPU:

- Native FP16 support
- Rapidly port and deploy neural networks in Caffe and TensorFlow formats
- End-to-end acceleration for many common deep neural networks
- Industry-leading inferences/s/watt performance
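Native FP16 support means weights and activations are handled as 16-bit half-precision values, so it can help to see how much precision that format keeps. The following minimal sketch uses only the Python standard library to round a number to half precision on the host. It illustrates the number format itself and is not part of any Intel or IEI tooling; the helper name `to_fp16` is hypothetical.

```python
import struct


def to_fp16(value: float) -> float:
    """Round `value` to the nearest IEEE 754 half-precision (FP16) number.

    Raises OverflowError if `value` is too large for FP16 (max finite 65504).
    """
    # The "e" struct format code packs a float as a 16-bit half-precision value.
    return struct.unpack("<e", struct.pack("<e", value))[0]


if __name__ == "__main__":
    for original in (1.0, 0.1, 1234.5678):
        rounded = to_fp16(original)
        print(f"{original!r} -> {rounded!r} (abs error {abs(original - rounded):.3g})")
```

Values with a short binary expansion such as 1.0 survive unchanged, while a value like 0.1 picks up a small rounding error. This is why models are usually validated for accuracy after conversion to FP16.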


| Model | Mustang-MPCIE-MX2 |
| --- | --- |
| Main Chip | 2 x Intel® Movidius™ Myriad™ X MA2485 VPU |
| Operating Systems | Ubuntu 16.04.3 LTS 64-bit, CentOS 7.4 64-bit, Windows® 10 64-bit |
| Dataplane Interface | miniPCIe |
| Power Consumption | Approximately 7.5 W |
| Operating Temperature | 0°C to 55°C (in TANK AIoT Dev. Kit) |
| Cooling | Active heatsink |
| Dimensions | 30 x 50 mm |
| Operating Humidity | 5% to 90% |
| Supported Topologies | AlexNet, GoogleNetV1/V2, Mobile_SSD, MobileNetV1/V2, MTCNN, Squeezenet1.0/1.1, Tiny Yolo V1 & V2, Yolo V2, ResNet-18/50/101 |
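When choosing a model for this card, a quick lookup against the topology list above can serve as a first sanity check. The sketch below simply encodes that list as data. The helper names and the name-normalization rule are assumptions made for illustration, and a topology missing from the list is not necessarily unsupported, only unlisted on this page.

```python
import re

# Topologies listed in the specification table, with shorthand such as "V1/V2" expanded.
SUPPORTED_TOPOLOGIES = (
    "AlexNet", "GoogleNetV1", "GoogleNetV2", "Mobile_SSD",
    "MobileNetV1", "MobileNetV2", "MTCNN",
    "Squeezenet1.0", "Squeezenet1.1",
    "Tiny Yolo V1", "Tiny Yolo V2", "Yolo V2",
    "ResNet-18", "ResNet-50", "ResNet-101",
)


def _normalize(name: str) -> str:
    """Lowercase `name` and drop everything except letters and digits."""
    return re.sub(r"[^a-z0-9]", "", name.lower())


_SUPPORTED_KEYS = {_normalize(name) for name in SUPPORTED_TOPOLOGIES}


def is_listed_topology(name: str) -> bool:
    """Return True if `name` matches a listed topology, ignoring case and punctuation."""
    return _normalize(name) in _SUPPORTED_KEYS


if __name__ == "__main__":
    for candidate in ("resnet50", "Tiny YOLO v2", "YOLO v3"):
        status = "listed" if is_listed_topology(candidate) else "not listed"
        print(f"{candidate}: {status}")
```

Normalizing names before comparison lets spellings such as `resnet50` and `ResNet-50` match the same entry.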


| Part No. | Description |
| --- | --- |
| Mustang-MPCIE-MX2-R10 | Deep learning inference accelerator miniPCIe card with 2 x Intel® Movidius™ Myriad™ X MA2485 VPU, miniPCIe interface, 30 mm x 50 mm, RoHS |
